I’ve been tracking tech and design shifts long enough to know when something actually matters.

You’re here because keeping up with every new tool, software update, and hardware release feels impossible. And honestly, most of it doesn’t deserve your attention anyway.

Here’s the reality: innovation moves fast, but most of what you see is just repackaged hype. The real changes? They’re happening in specific areas that will actually affect how you work.

I spend my time at Gfxdigitational separating what’s worth knowing from what’s just noise. We focus on the tools and trends that have real applications for creators and developers.

This article gives you the current state of digital graphics and software development. I’ll show you which updates matter and which ones you can ignore.

We track these changes as they happen. Not weeks later when everyone else is writing about them. That means you’re getting what’s relevant right now.

You’ll see which tools are gaining traction, what hardware shifts are affecting workflows, and where software development is heading next.

No fluff about the future of tech. Just what’s happening today and how it affects your work.

The AI Creative Wave: Generative Tools Go Mainstream

You’ve probably noticed it by now.

AI tools aren’t just tech demos anymore. They’re sitting right inside the software you already use.

Adobe Firefly lives in Photoshop. Canva’s Magic Studio handles your social posts. Midjourney went from Discord bot to production tool faster than most people expected.

Some designers hate this. They say AI cheapens the craft and turns everyone into a “creator” with zero skill. I hear this argument all the time, and I understand where it comes from.

But here’s what that view misses.

These tools aren’t replacing you. They’re doing the grunt work you never wanted to do anyway.

Think about it. How many hours have you spent tweaking background elements or generating variations of the same concept? That’s where text-to-image models actually help.

The real shift happening right now? Text-to-video is getting scary good.

Runway and Pika can turn a sentence into motion graphics. You type “camera pan across a futuristic cityscape at sunset” and get usable footage in minutes. Not perfect footage (the hands still look weird sometimes). But usable.

Then there’s 3D model generation. Tools like Luma AI and Spline let you describe an object and watch it materialize in three dimensions. No more spending days in Blender for a simple product mockup.

Canva vs Adobe is an interesting comparison here. Canva makes AI accessible for non-designers. Their Magic Studio feels like creative training wheels. Adobe Firefly, on the other hand, assumes you know what you’re doing and just need to work faster.

Neither approach is wrong. They serve different people.

What matters is this: the skill that separates good designers from mediocre ones isn’t software mastery anymore. It’s prompt engineering.

Sounds fancy. It’s not.

You’re just learning to communicate what you want clearly. “Make it pop” doesn’t work with AI (it never worked with humans either). But “vibrant sunset colors with high contrast and dramatic shadows” gets you somewhere.
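One way to make that habit stick is to write prompts as structured fields instead of one loose sentence. The sketch below is only an illustration: `PromptSpec` and `buildPrompt` are hypothetical names, the "city skyline" subject is a placeholder, and nothing here calls a real image tool. It just assembles the descriptive string you would paste into one.

```typescript
// Hypothetical helper: turns structured creative intent into a prompt string.
// None of these names come from a real tool's API.
interface PromptSpec {
  subject: string;   // what the image shows
  palette?: string;  // color direction
  lighting?: string; // light and shadow direction
  contrast?: string; // tonal range
}

function buildPrompt(spec: PromptSpec): string {
  // Keep only the fields that were actually filled in, then join them.
  return [spec.subject, spec.palette, spec.lighting, spec.contrast]
    .filter((part): part is string => part !== undefined && part.trim() !== "")
    .map((part) => part.trim())
    .join(", ");
}

const prompt = buildPrompt({
  subject: "city skyline",
  palette: "vibrant sunset colors",
  lighting: "dramatic shadows",
  contrast: "high contrast",
});

console.log(prompt);
// city skyline, vibrant sunset colors, dramatic shadows, high contrast
```

Filling in separate fields forces you to decide on color, light, and contrast up front, which is the whole point of being specific.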

I’ve been testing this stuff for Gfxdigitational, and the workflow change is real. My ideation phase takes half the time it used to. I generate 20 concept variations before breakfast instead of sketching three by lunch.

Here’s what actually works:

Use AI for rapid iteration. Generate ten versions of your idea in the time it used to take to make one. Then pick the best direction and refine it manually.

Let the tools handle complex assets you’d normally outsource. Need a custom texture pack? Describe it. Want background characters for an illustration? Generate them.

But keep your human judgment. AI gives you options. You still decide what’s good.

The designers winning right now aren’t fighting these tools. They’re using them to do more work at higher quality in less time.

That’s not cheating. That’s just being smart about where you spend your energy.

Hardware Breakthroughs: The Engines Behind Next-Gen Graphics

You know what drives me crazy?

Waiting 45 minutes for a single frame to render while my GPU sounds like it’s preparing for takeoff.

I’ve been there. You’re on a deadline and your machine decides now is the perfect time to choke on a moderately complex scene. Meanwhile, you’re watching that progress bar crawl along at 2% wondering if you should just give up and go make coffee.

Here’s the frustrating part. For years, we were told that better graphics meant bigger price tags. Want real-time ray tracing? Better have a few thousand dollars lying around.

But something shifted.

NVIDIA and AMD finally stopped treating consumer hardware like an afterthought. Their latest architectures actually deliver on the promise of real-time ray tracing without requiring a second mortgage. The tech news Gfxdigitational covers shows just how fast this space is moving.

What really changed the game though? Neural Processing Units.

These dedicated AI accelerators (like the Neural Engine in Apple’s M-series chips) handle the machine-learning work that used to bog down your CPU. They’re built specifically for the AI-assisted work creatives increasingly do.

And honestly, the difference is night and day.

Render times that used to eat up your afternoon now finish during a lunch break. 3D applications that stuttered and lagged? They’re smooth now. You can even run serious AI models right on your machine without sending everything to the cloud.

No more sitting around waiting for your hardware to catch up with your ideas.

That’s what these breakthroughs actually mean for us.

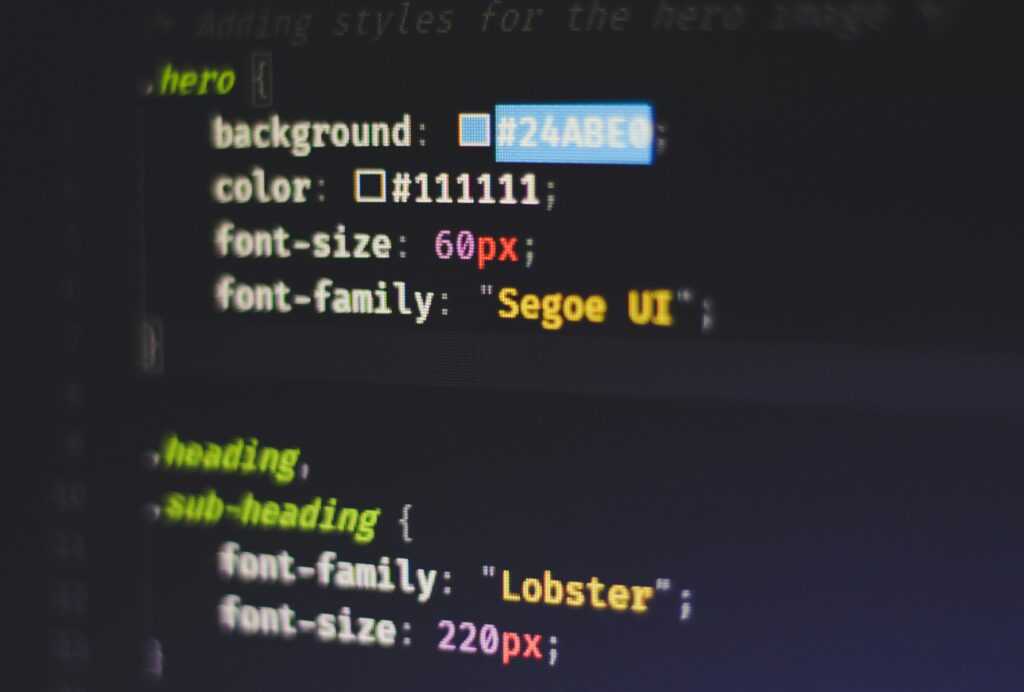

Software and UI/UX: The Shift Toward Immersive and Collaborative Design

You’ve probably noticed something weird happening with design lately.

Flat screens aren’t enough anymore.

I’m watching designers scramble to figure out how to build interfaces for spaces you can walk through. Not just look at. Actually move around in.

The Apple Vision Pro dropped and suddenly everyone’s talking about spatial computing like it’s been here all along. But here’s what most tech news outlets won’t tell you.

Most designers have NO idea how to design for 3D space.

The Problem With Going Spatial

Sure, Meta Quest 3 and Vision Pro are cool. But designing for them? That’s a completely different skill set.

You can’t just slap a flat interface into a 3D environment and call it done. I’ve seen teams try. It looks terrible and feels worse.

The real shift isn’t about the hardware. It’s about how we think about interaction itself.

Figma added new collaboration features this year. Spline made 3D design accessible to people who’ve never touched Blender. These tools are changing WHO can design for immersive spaces.

But here’s the gap nobody’s filling.

We’re still using design principles from 2010. Tap targets. Scroll depth. Click-through rates.

None of that applies when someone’s using hand gestures in mid-air.

I’ve been testing spatial interfaces in O’Fallon, and what works is counterintuitive. Bigger isn’t always better. Closer isn’t always more important. Your peripheral vision matters as much as your focal point.

The designers who get this right? They’re not just learning new software.

They’re unlearning EVERYTHING they know about screens.

Emerging Tech on the Horizon: What to Watch Next

WebGPU is about to change what we can do in a browser.

Think of it as giving web apps the same graphics power your computer uses for games. No downloads. No installs. Just open a tab and run software that used to need serious hardware.

Developers can finally build complex 3D apps that run smoothly right in the browser. Chrome already ships WebGPU, and support in other browsers such as Firefox is still rolling out. We’re talking CAD programs, advanced simulations, and games that don’t feel like watered-down versions.
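Since WebGPU support still varies by browser, a page usually checks for it before committing to a renderer. Here’s a minimal sketch of that check. `navigator.gpu` and `requestAdapter()` are part of the WebGPU API, but the helper names (`hasWebGPU`, `chooseRenderer`), the `"webgl-fallback"` label, and the small structural types are my own assumptions so the example compiles without extra type packages.

```typescript
// Minimal structural types (assumptions) standing in for the browser's
// Navigator and GPU objects, so the sketch needs no @webgpu/types install.
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type Renderer = "webgpu" | "webgl-fallback";

// True when the browser exposes the WebGPU entry point at all.
function hasWebGPU(nav: NavigatorLike): boolean {
  return nav.gpu !== undefined;
}

// The entry point can exist while no usable adapter is available,
// so ask for an adapter before picking the WebGPU path.
async function chooseRenderer(nav: NavigatorLike): Promise<Renderer> {
  const gpu = nav.gpu;
  if (gpu === undefined) {
    return "webgl-fallback";
  }
  const adapter = await gpu.requestAdapter();
  return adapter === null ? "webgl-fallback" : "webgpu";
}

// In a browser you would pass the real navigator object, for example:
//   chooseRenderer(navigator as NavigatorLike).then((r) => console.log(r));
```

Taking the navigator as a parameter instead of reading the global keeps the check easy to test outside a browser.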

Real-time engines are showing up everywhere now.

Unreal Engine 5 isn’t just for video games anymore. Film studios use it for virtual production (the LED-stage technique The Mandalorian made famous). Car companies design vehicles in it. Architects walk clients through buildings that don’t exist yet.

The line between game development and professional visualization? It’s basically gone.

Here’s what makes this interesting. These tools used to cost a fortune and require specialized training. Now a small design firm in O’Fallon can use the same engine as a Hollywood studio. I cover this topic extensively in Software Tools Gfxdigitational.

Open-source AI changed the game completely.

A year ago, cutting-edge AI models were locked behind corporate walls. Now? Developers download powerful models for free and run them locally.

Small companies don’t need million-dollar budgets to experiment with AI anymore. They just need decent hardware and time to learn.

This shift shows up in the technology news Gfxdigitational covers regularly. The barrier to entry keeps dropping while the tech gets better.

What does this mean for you?

If you’re building anything digital, you have access to tools that didn’t exist outside of major tech companies just a few years ago. The question isn’t whether you can afford the tech anymore.

It’s whether you’ll actually use it.

Your Roadmap for the Future of Digital Creation

You now understand the key developments shaping digital creation.

AI-driven creative tools are getting smarter. Hardware is more powerful than ever. Software design is moving toward immersive experiences that change how we work.

I know keeping pace with technology feels like a constant challenge. The moment you master one tool, three new ones appear.

But here’s the thing: you don’t need to chase everything.

Focusing on these core trends gives you a framework. You can filter out the noise and zero in on changes that actually matter for your work.

Now it’s time to get hands-on.

Pick one AI tool you’ve been curious about and test it on a real project. Or research that hardware upgrade you’ve been putting off. See how it transforms your creative process.

The future of digital creation isn’t some distant concept. It’s happening right now in the tools and tech you choose to adopt.

Gfxdigitational tracks these developments so you can stay informed without the overwhelm. We show you what’s worth your attention and what you can skip.

Your next step is simple: experiment with something new this week.

Zelphia Ollvain has opinions about digital tech news. Informed ones, backed by real experience, but opinions nonetheless, and they don't try to disguise them as neutral observation. They think a lot of what gets written about digital tech news, practical tech tutorials, and graphic design innovations is either too cautious to be useful or too confident to be credible, and their work tends to sit deliberately in the space between those two failure modes.

Reading Zelphia's pieces, you get the sense of someone who has thought about this stuff seriously and arrived at actual conclusions, not just collected a range of perspectives and declined to pick one. That can be uncomfortable when they land on something you disagree with. It's also why the writing is worth engaging with. Zelphia isn't interested in telling people what they want to hear. They are interested in telling them what they actually think, with enough reasoning behind it that you can push back if you want to. That kind of intellectual honesty is rarer than it should be.

What Zelphia is best at is the moment when a familiar topic reveals something unexpected: when the conventional wisdom turns out to be slightly off, or when a small shift in framing changes everything. They find those moments consistently, which is why their work tends to generate real discussion rather than just passive agreement.
